Hire Hadoop Developers

Build powerful and flexible big data solutions with our expert Hadoop developers. Our team uses the latest Hadoop ecosystem tools, such as HDFS, MapReduce, Hive, and Spark, to efficiently process and analyze massive datasets. With Linkitsoft, you get access to pre-vetted Hadoop experts who can handle your data pipelines, optimize performance, and deliver insights that drive real business results.

Let's Start a Project

Our Hadoop Development Expertise

Work with expert Hadoop developers to turn your big data into real business results. Our team builds scalable systems for data storage, processing, and analytics that help you make faster decisions, boost efficiency, and grow your business.

Hadoop Consultation

Not sure where to start with your big data project? Our experts provide professional Hadoop consultation to understand your business needs, suggest the best architecture, and plan the right tools for your data workflows.

Hadoop Implementation

We set up distributed batch processing systems to handle large datasets efficiently. Our developers ensure your Hadoop environment is robust, scalable, and ready for future growth.

Hadoop Integration

We integrate Hadoop with your existing systems to enable smooth data flow. This ensures that your applications, databases, and analytics tools work together without delays or errors.

Hadoop Configuration & Optimization

Our team fine-tunes Hadoop clusters and job parameters to maximize performance. Optimized systems reduce processing time, save resources, and improve overall efficiency.

Data Mining & Aggregation

We extract valuable insights from raw data by applying smart aggregation and mining techniques. Clean and structured data helps your team make informed, data-driven decisions.

Business Intelligence & Analytics

We connect Hadoop data with BI tools to build dashboards, reports, and analytics workflows. This enables your business to track trends, monitor KPIs, and gain actionable insights quickly.

Engagement & Hiring Models

Every Hadoop project is unique. That’s why Linkitsoft offers flexible hiring options. This way, you pay only for the support you need.

Dedicated Hadoop Developers

For long-term or complex Hadoop projects, a dedicated team is the best choice. They focus solely on your project, understand your data architecture, and work closely with you to build the right data pipelines, processing jobs, or analytics workflows.

Fixed-Cost Model

If your Hadoop project has a clear plan and scope, the fixed-cost model works best. We agree on the budget and timeline upfront, so you always know what to expect.

Hourly / On-Demand Hiring

For short-term or changing requirements, you can hire Hadoop developers by the hour. This is ideal for feature updates, performance optimizations, API enhancements, bug fixes, or small improvements. You pay only for the time used.

Our Case Studies

Explore real projects where our ideas, strategy, and technology deliver measurable results.

Mobile App

Foodosti - Food Delivery Application

Foodosti is a food delivery startup in Kentucky that wanted to give restaurants and customers a cheaper, smarter alternative to expensive apps like DoorDash and Uber Eats. We helped turn their idea into a real app with a driver bidding system, where riders set their own delivery prices. The app launched in Lexington, Kentucky, and quickly became a hit with both customers and delivery drivers.

Mobile App

Fitalike - Fitness & Wellness App

Fitalike, a fitness and wellness platform, struggled with poor usability, high subscription costs, and limited reach due to the absence of an Android app. Linkitsoft transformed the idea into a complete cross-platform fitness solution by upgrading the iOS app, building a full-featured Android app, and developing a powerful admin panel. The app made it easy for users to discover certified trainers, chat in real time, make secure payments, and manage subscriptions seamlessly. With smarter onboarding and centralized admin control, Fitalike improved user engagement, built trust between trainers and clients, and created a reliable fitness experience across devices.

Kiosk App

Mr. Cod (Order Wave – Self-Ordering Kiosk)

Mr. Cod, a popular UK-based restaurant known for its fish and chips, faced challenges managing high customer volume and daily tax tracking. Linkitsoft introduced Order Wave, a self-service kiosk that simplified ordering, enabled custom order saving via phone login, and automated tax collection using a Black Box system. This solution streamlined operations, reduced order errors, and provided efficient daily reporting, significantly improving both customer experience and backend management.

Vending App

BVEND - Smart Vending Machine Application

BVEND, a school-focused vending operator, wanted to create a secure and cashless snacking experience for students. Traditional cash systems were inconvenient and hard to manage for both kids and parents. Linkitsoft built a custom web-based platform that used student ID cards for payments, enabled parental top-ups, and added gamified features for engagement. The system simplified management, boosted user satisfaction, and made vending fun, safe, and efficient for schools.

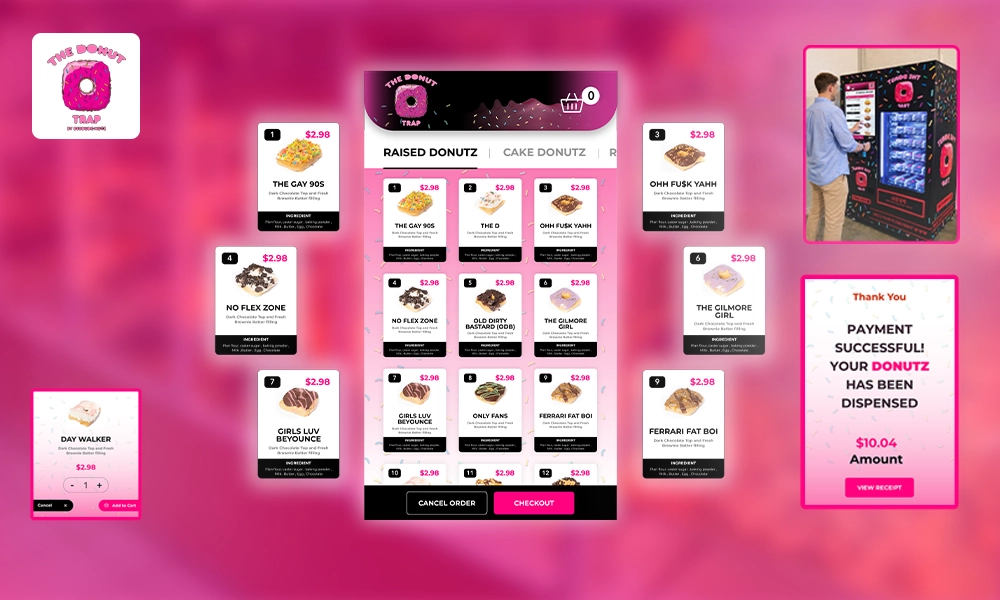

Vending App

DONUT TRAP - Smart Donut Vending Application

Donut Trap, a small donut and coffee business, faced challenges managing inventory, payments, and custom orders manually. Linkitsoft developed a responsive mobile app that automated inventory updates, streamlined payments, and enabled customers to place customized orders easily. The app also offered real-time tracking and remote management, reducing manual work and errors. With automation and a smooth digital experience, Donut Trap boosted efficiency and customer satisfaction while saving valuable time.

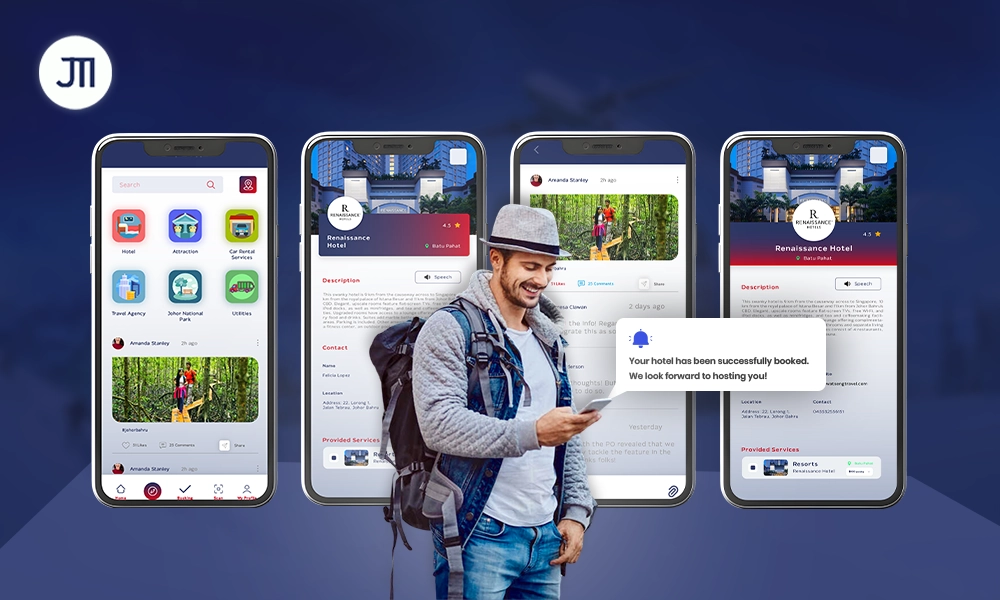

Mobile App

JTI - Modern Tourism Application

JTI, a tourism initiative in Malaysia, faced challenges as travelers struggled with scattered apps for booking, navigation, and recommendations. Linkitsoft developed a centralized mobile app that unified hotel bookings, attractions, transport, and personalized suggestions in one platform. The app also promoted local businesses through in-app advertising. This solution simplified trip planning, improved user experience, and boosted tourism engagement across Johor Bahru, making travel more connected and enjoyable.

Vending App

UvendTech - Smart User-Centric Vending App

UvendTech, a Malaysian vending operator, struggled with pre-installed software that lacked local payment support, backend integration, and flexibility. Linkitsoft developed a custom vending platform tailored for Malaysia, adding e-wallet payments, Malay language support, and real-time data integration. A centralized dashboard enabled remote management and brand customization. This transformed UvendTech’s machines into a fully localized, scalable, and efficient system that improved operations and enhanced customer convenience nationwide.

Vending Software

Showdrop - Custom Vending Software

Showdrop, a marketing tech company, wanted to modernize product sampling in grocery stores. Traditional sampling methods were inefficient and hard to measure. Linkitsoft developed custom vending software with QR-based access, offline functionality, and real-time temperature monitoring. The branded interface made sampling interactive and engaging, while backend tracking ensured smooth operations. This solution transformed sampling into a smart, data-driven experience that enhanced brand visibility and customer engagement in retail spaces.

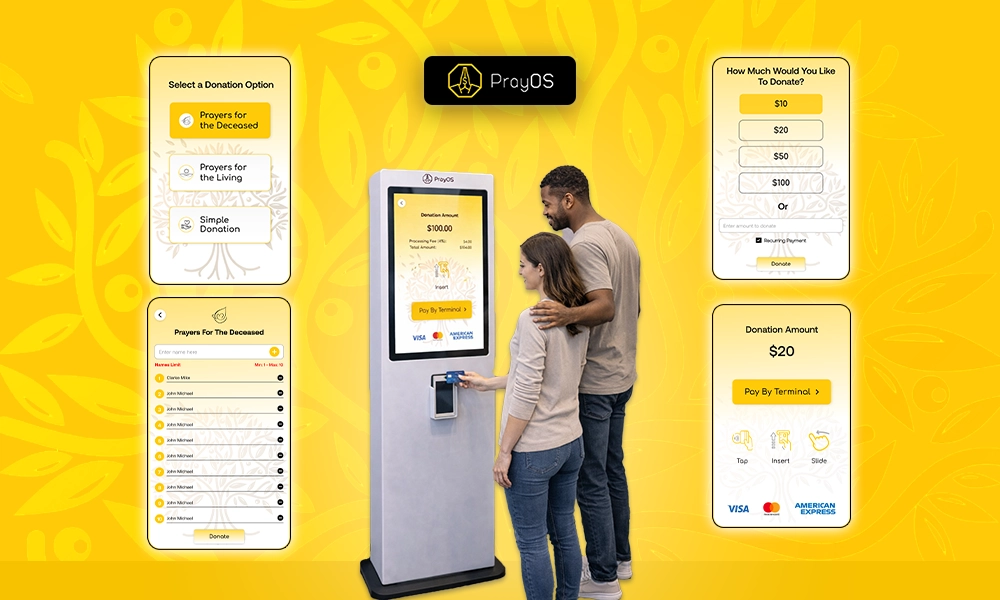

Kiosk App

PrayOS Kiosk App - Donation Made Easy

PrayOS, a faith-based organization, wanted to help people share prayers and support their community in a secure, modern way. Traditional methods lacked accessibility and personalization. Linkitsoft developed a kiosk system where users can submit prayers, make donations, and receive guidance from religious leaders. Built on AWS for reliability and security, the solution strengthened community connections, improved transparency, and made spiritual engagement more accessible and meaningful for everyone.

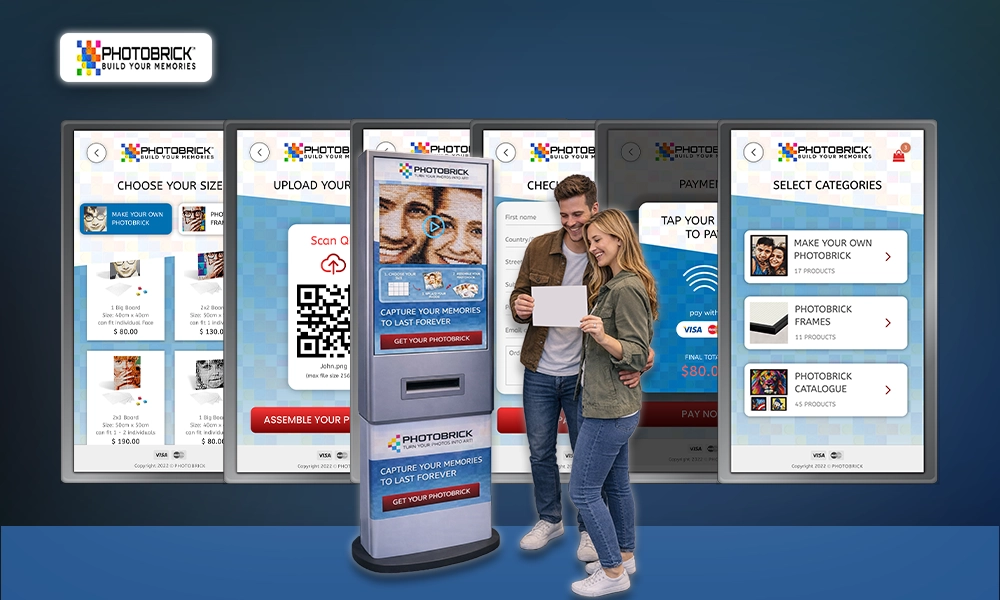

Kiosk App

Photobrick - Photo Recreation Kiosk Application

Photobrick, a personalized gift brand, wanted to make memory preservation more interactive and lasting. Traditional photo printing lacked engagement and customization. Linkitsoft developed an interactive kiosk system that lets users upload photos via a QR-linked web app, preview designs in real time, and complete secure contactless payments. This seamless experience enhanced customer engagement, streamlined operations, and helped Photobrick deliver a creative, modern, and personalized way to capture meaningful memories.

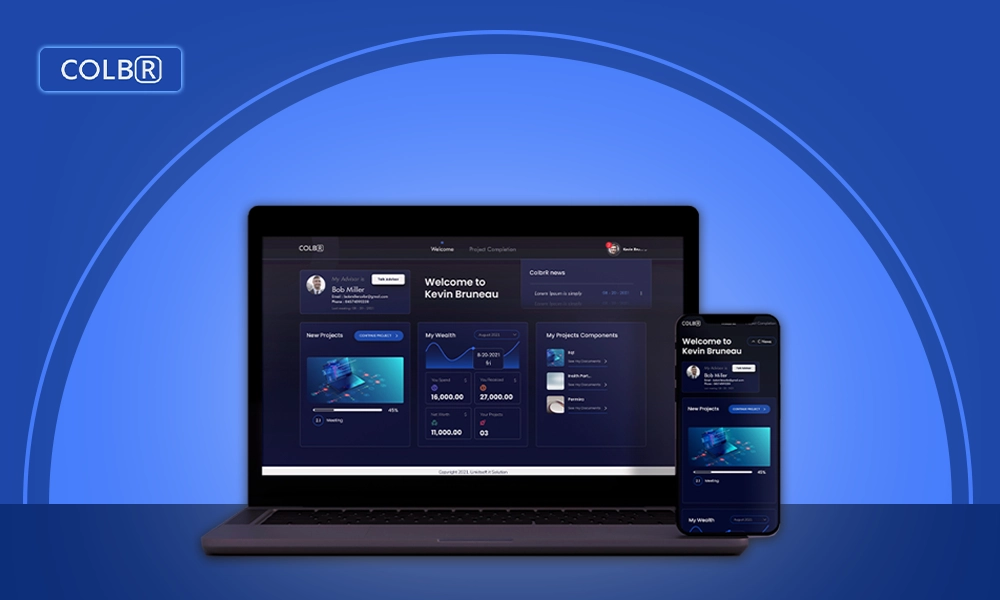

Web App

COLBR - Secure Investing for everyday

COLBR, a digital investment platform, faced challenges with complex onboarding and scattered client-advisor communication. Linkitsoft built a secure web platform with dedicated portals for customers and advisors, enabling easy document uploads, validation, meeting scheduling, and progress tracking. By centralizing everything into one streamlined system, the solution reduced delays, eliminated manual errors, and made financial management simpler, faster, and more transparent for both customers and advisors.

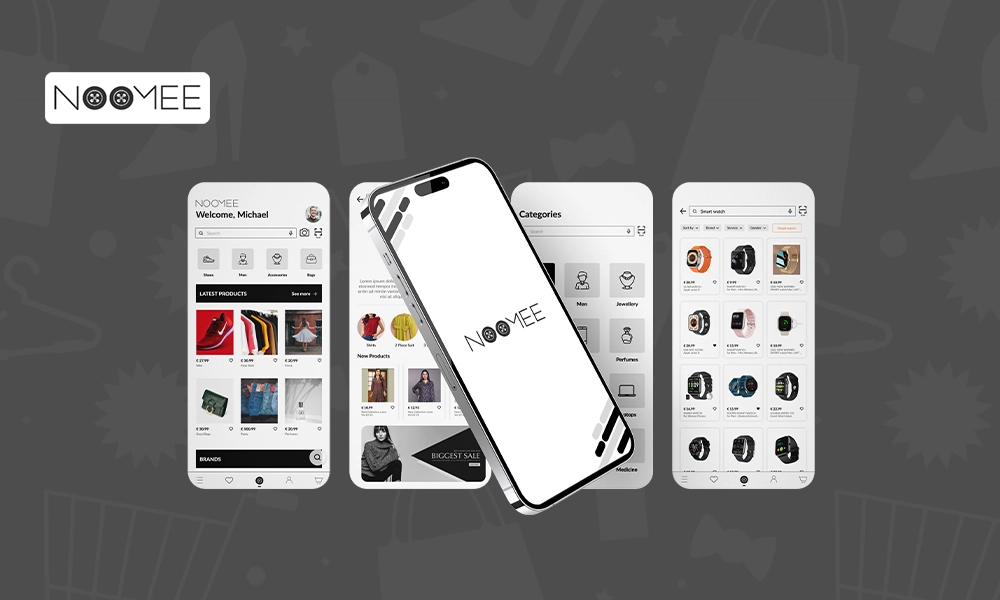

Mobile App

Noomee - Ecommerce Mobile App

Noomee, an Italian e-commerce startup, wanted to simplify online shopping as users faced slow checkouts and poor product search experiences. Linkitsoft built a cross-platform mobile app with a secure, minimal-step payment process and an advanced image-based search feature. With organized product categories and a clean interface, the app made shopping faster, safer, and more intuitive, enhancing user satisfaction and confidence in online purchasing.

Kiosk App

Jood - Donation Kiosk Application

Jood, a digital donation platform in Saudi Arabia, wanted to make charitable giving easier, faster, and more transparent. Donors previously faced difficulty tracking contributions and trusting where funds went. Linkitsoft built a bilingual, secure kiosk and web system with real-time tracking, encrypted payments, and franchise management. The platform unified charities under one network, ensured instant transfers, and transformed donations into a seamless, trustworthy, and accessible experience for everyone.

Kiosk App

Texas Haunters Convention - Badge Printing Kiosk

Texas Haunters Convention needed a faster way to handle event check-ins as manual badge printing caused long lines and delays. Linkitsoft developed a custom self-service kiosk connected to the registration database, allowing attendees to scan QR codes or search by email to print badges instantly. The system improved efficiency, reduced staff workload, and delivered a smooth, professional, and hassle-free check-in experience for thousands of event participants.

Vending Software

Vendy - Vending Machine Application

Vendy, a smart vending software company, faced challenges with outdated cash-based machines that lacked safety and real-time management. Linkitsoft developed a contactless vending platform that allowed users to scan QR codes, browse products, and pay digitally. The solution included real-time inventory tracking, secure payments, and a centralized dashboard for retailers. This innovation modernized vending operations, improved hygiene, and delivered a faster, more reliable shopping experience for users.

Kiosk Software

Xavier College - Self-Service Attendance Kiosk

Xavier College in Australia needed a faster and more reliable system for recording student late arrivals as manual check-ins were slow and error-prone. Linkitsoft developed a self-service attendance kiosk integrated with Microsoft Dynamics CRM. Students can scan their ID, take a photo for verification, and print a confirmation slip instantly. The solution automated recordkeeping, reduced administrative workload, and improved accuracy, creating a seamless and efficient check-in process.

Unified System

Beauty Lab - Custom Digital Booking System

Beauty Lab, a modern salon, struggled with a disorganized booking and payment process that frustrated clients and caused scheduling delays. Linkitsoft developed a unified digital system integrating online booking, a self-check-in kiosk, and a specialist app. The platform enabled real-time scheduling, NFC-enabled payments, and seamless synchronization across all devices. This solution simplified operations, improved customer satisfaction, and turned salon management into a smooth, modern, and efficient experience.

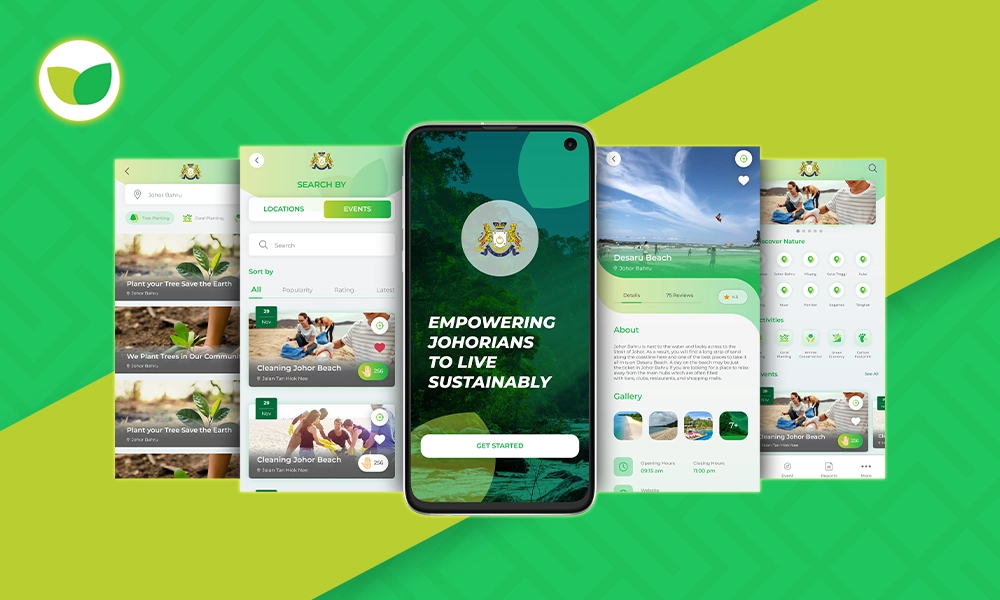

Mobile App

Johor Environmental System - Sustainability-Focused Mobile App

Johor Environmental System is a sustainability-focused mobile app developed by Linkitsoft to support Johor residents in living more sustainably. The client wanted to address local environmental problems, so we built a platform packed with tips, resources, and tools that help users reduce waste, save energy, and discover eco-friendly products. The solution promotes sustainable living while supporting local green initiatives.

Technologies & Tools Our Hadoop Developers Use

Our Hadoop developers use proven tools and technologies from the Hadoop ecosystem to build scalable and reliable data solutions. Each tool is selected to handle large datasets efficiently, improve processing speed, and support your business goals.

HDFS

HDFS is used for distributed data storage across multiple nodes. It allows us to store and manage large datasets reliably.
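As a rough illustration of what distributed storage means here, the sketch below splits a file into fixed-size blocks and assigns each block to several distinct nodes. The 128 MB block size and replication factor of 3 mirror commonly used HDFS defaults, but the round-robin placement, the node names, and the function names are simplifying assumptions for this example; real HDFS uses a rack-aware placement policy.

```python
MB = 1024 * 1024


def split_into_blocks(file_size, block_size=128 * MB):
    """Return the size in bytes of each block for a file of file_size bytes."""
    if file_size < 0 or block_size <= 0:
        raise ValueError("file_size must be >= 0 and block_size must be > 0")
    full_blocks, remainder = divmod(file_size, block_size)
    # The last block only holds the leftover bytes, if there are any.
    return [block_size] * full_blocks + ([remainder] if remainder else [])


def place_replicas(num_blocks, nodes, replication=3):
    """Assign each block to `replication` distinct nodes.

    Round-robin placement is an assumption made for illustration only.
    """
    if replication > len(nodes):
        raise ValueError("replication cannot exceed the number of nodes")
    return {
        block_id: [nodes[(block_id + r) % len(nodes)] for r in range(replication)]
        for block_id in range(num_blocks)
    }


if __name__ == "__main__":
    nodes = ["node-a", "node-b", "node-c", "node-d"]  # hypothetical node names
    blocks = split_into_blocks(300 * MB)
    for block_id, replicas in place_replicas(len(blocks), nodes).items():
        print(block_id, blocks[block_id] // MB, "MB ->", replicas)
```

Because every block lives on more than one node, losing a single node in this toy model never removes the only copy of a block.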

YARN

YARN helps manage cluster resources and ensures efficient execution of data processing tasks. It keeps your Hadoop environment stable and well-organized.

Hadoop MapReduce

MapReduce is used for batch data processing. It allows us to process large volumes of data quickly across distributed systems.
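To make the batch model concrete, here is a minimal single-process sketch of the map, shuffle, and reduce phases in plain Python, using word count as the example. It does not use the Hadoop API; the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are illustrative assumptions, and on a real cluster each phase would run in parallel across many nodes.

```python
from collections import defaultdict
from itertools import chain


def map_phase(line):
    """Mapper: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Shuffle: group all emitted values by key before reduction."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reducer: collapse all values for one key into a single count."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence over the input lines."""
    mapped = chain.from_iterable(map_phase(line) for line in lines)
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    sample = ["big data big results", "data pipelines"]
    print(word_count(sample))
```

The mapper and reducer only ever see one line or one key at a time, which is what lets a framework like MapReduce spread the same logic over many machines.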

Java

Java is the core language for Hadoop development and is used to build stable, high-performance data processing apps.

Python

Python is used for scripting, automation, and data analysis. It helps speed up development and simplifies complex data tasks.

R

R is used for statistical analysis and data modeling. It helps in extracting insights from large datasets.

Apache Mahout

Mahout provides scalable machine learning algorithms that work well with Hadoop data.

TensorFlow

TensorFlow is used to develop and train machine learning models on large datasets.

Apache MXNet

MXNet supports deep learning and is designed to handle big data workloads efficiently.

Apache HBase

HBase is a NoSQL database that handles real-time read and write operations on large datasets.

Apache Cassandra

Cassandra is used for distributed data storage with high availability and scalability.

MongoDB

MongoDB manages flexible and unstructured data, making it useful for logs and analytics.

Talend

Talend helps with data integration, quality, and governance across different systems.

Apache Airflow

Airflow is used to schedule and manage data workflows.

Apache ZooKeeper

ZooKeeper is used to manage coordination between distributed systems.

AWS Developer Tools

AWS Developer Tools help automate build, test, and deployment processes for Hadoop applications.

Prometheus

Prometheus is used to monitor system performance and track key metrics.

Nagios

Nagios helps monitor infrastructure health and ensures system uptime.

Docker

Docker is used to containerize Hadoop apps for consistent deployment across environments.

Kubernetes

Kubernetes helps manage and scale containerized applications efficiently.

Ansible

Ansible automates configuration and deployment, saving time and reducing manual effort.

Clients We Have Worked With

We have built a long list of satisfied clients by delivering top-notch IT solutions.

Hire Hadoop Developers in Six Easy Steps

Linkitsoft makes hiring Hadoop developers simple. Our experienced team helps you get started quickly and handle your big data projects efficiently.

1. Share Your Requirements

Tell us about your Hadoop project through a quick call or by filling out a form on our website. Share your data needs, tools, and goals so we can understand your requirements clearly.

2. Talent Matching

We review your requirements and shortlist Hadoop developers with the right experience in tools like HDFS, MapReduce, and Spark. We focus on finding experts who fit your project needs.

3. Interview & Select

You can connect directly with the shortlisted developers and choose the ones who match your workflow, communication style, and technical expectations.

4. Smooth Onboarding & Setup

Once you have made your choice, we onboard the selected Hadoop developers into your team. We also align on tools, data workflows, and processes, so work starts without unnecessary delays.

5. Collaborate & Build

Your Hadoop developers begin working on your data pipelines, processing systems, or analytics tasks. You stay updated with clear communication at every step.

6. Ongoing Support

We stay connected throughout the project. You can reach out anytime to get support, scale your team, or improve your data systems.

Awards & Recognition

We thrive on accelerating the path to disruption and implementing agile methodologies to design, build, deliver, and scale digital solutions. Our future-proof, growth-centric tech has earned us notable awards and recognition across industries and regions.

Why Hire Hadoop Developers from Us

Working with Linkitsoft means you get experienced developers who understand your data challenges. Our process is simple, transparent, and focused on helping you get real results from your data.

Access to Pre-Vetted Hadoop Developers

We connect you with Hadoop developers who are tested for their skills and experience. Each developer understands tools like HDFS, MapReduce, and Spark, and can handle large-scale data projects with confidence.

Quick Hiring Process

We help you find and onboard Hadoop developers quickly. Once we understand your data needs, we share a shortlist of experts who are ready to start without delay.

Flexible Hiring Options

Every data project is different. You can hire full-time developers, part-time support, or specialists for specific tasks based on your project scope and budget.

Focus on Data Processing & Performance

Our Hadoop developers build data pipelines and processing systems that handle large volumes efficiently. We focus on clean workflows and reliable performance so your data is always ready when you need it.

Cost-Effective Solutions

We offer skilled Hadoop developers at competitive rates, so you get quality work that helps you manage and analyze data without overspending.

Clear Data Workflows

Our developers create structured data pipelines and workflows that are easy to manage. This helps your team access, process, and use data without confusion or delays.

Testimonials From Our Clients

Frequently Asked Questions

How quickly will I be able to hire a Hadoop developer?

We keep the process fast and simple so you can get started without delays. In most cases, you can hire a skilled Hadoop developer within 3–7 business days, depending on your project scope and requirements.

Can I interview the Hadoop developers myself?

Yes, you can. We believe in full transparency, so you’re free to interview our Hadoop developers to evaluate their technical expertise, experience with big data tools, and communication skills before making a decision.

What are the typical costs of hiring Hadoop developers from you?

The cost depends on factors like experience level, project complexity, and engagement model (hourly, part-time, or full-time). We offer flexible and competitive pricing, and once we understand your project needs, we provide a clear and detailed quote.

Do your Hadoop developers work in my time zone?

Yes, our Hadoop developers can adjust their working hours to match your time zone. This helps ensure smooth collaboration, faster feedback, and better project alignment.

How do you guarantee quality and communication?

Our Hadoop developers are carefully selected based on their experience with big data ecosystems, including tools like HDFS, MapReduce, and Spark. We follow agile practices, provide regular updates, and use reliable communication tools to ensure your project stays on track and meets quality standards.

What if a Hadoop developer isn’t a good fit?

If the assigned Hadoop developer doesn’t match your expectations, we offer a quick replacement to keep your project moving forward without any disruption.

How do you ensure data security and confidentiality?

Linkitsoft follows strict data security practices to protect your information. This includes NDAs, secure access controls, and safe data handling processes, ensuring your business data remains confidential at all times.

Have a Project To Discuss?

Connect with us and discover how our solutions can drive real results for your business.